Breeding the Evolutionary:

Interactive Emergence in Art and Education

By Dan Collins

This paper was first given at the 4th Annual Digital Arts Symposium: Neural Net{work}, University of Arizona,

Tucson, AZ, April 11–12, 2002

------------------------------------------------------------------

Learning is not a

process of accumulation of representations of the environment; it is a

continuous process of transformation of behavior…

--Humberto Maturana (1980)[1]

Art is not the

most precious manifestation of life.

Art has not the celestial and universal value that people like to

attribute to it. Life is far more

interesting.

--Tristan Tzara,

“Lecture on Dada” (1922)[2]

------------------------------------------------------------------

INTRODUCTION

In August 2000, researchers at

Brandeis University made headlines when they announced the development of a

computerized system that would automatically generate a set of tiny robots—very

nearly without human intervention.

“Robots Beget More Robots?,” asked the New York Times somewhat

skeptically on its front page. Dubbed the Golem project (Genetically Organized Lifelike

Electro Mechanics) by its creators, this was the first time that robots had

been designed by a computer and robotically fabricated. While machines making machines is

interesting in and of itself, the project went one step further: the robot offspring were “bred” for

particular tasks. Computer scientists Jordan Pollack

and colleague Hod Lipson had developed a set

of artificial life algorithms—evolutionary instruction sets—that allowed them

to “evolve” a collection of “physical locomoting machines” capable of goal

oriented behavior.[3]

The Golem project is just one

example of a whole new category of computer-based, creative research that seeks

to mimic--somewhat ironically given its dependence on machines--the

evolutionary processes normally associated with the natural world. Shunning fixed conditions and idealized

final states, the research is characterized by an interest in constant

evolutionary change, emergent behaviors, and a more fluid and active

involvement on the part of the user.

The research suggests a new and

evolving role for artists and designers working in computer-aided design

processes and interactive media, as well as an expanded definition of the

user/audience. Instead of exerting

total control over the process and product where “choices” are made with

respect to every aspect of the creative process, the task of the

artist/researcher becomes one of interacting effectively with machine-based

systems in ways that supercharge the investigative process. Collaboration is

encouraged: projects are often structured enabling others—fellow artists,

researchers, audience members—to interact with the work and further the

dialogue. While the processes often

depend on relatively simple sets of rules, the “product” of this work is

complex, open-ended, and subject to change depending on the user(s), the data,

and the context.

A healthy mix of interdisciplinary

research investigating principles of interaction, computational evolution, and

so-called emergent behaviors informs and deepens the work. Artists are finding new partners in fields

as diverse as educational technology, computer science, and biology. There are significant implications for the

way we talk about the artistic process and the ways we teach art.

As an introduction to this new

territory, I will trace some of the conceptual and historical highlights of

“evolutionary computing” and “emergent behavior.” I will then review some of

the artists, designers, and research scientists who are currently working in

the field of evolutionary art and design. Finally, I will consider some of the

pedagogical implications of emergent art for our teaching practices in the

arts.

------------------------

Most natural and living systems are both productive and

adaptive. They produce new material

(e.g., blood cells, tissue, bone mass) even while adapting to a constantly

changing environment. While natural and

living systems are "productive" in the sense of creating new

"information," human-made machines that can respond with anything

more than predictable binary "yes/no" responses are a relatively

recent phenomenon. To paraphrase media artist Jim Campbell, most machines are

simply "reactive," not interactive.[4] To move beyond reactive mechanisms,

the system needs to be smart enough to produce output that is patently new—that

is, not already part of the system.

"Intelligent" machines,

being developed with the aid of "neural networks" and "artificial

intelligence," can actually learn new behaviors and evolve their responses

based upon user input and environmental cues. Over time, certain properties

begin to "emerge" such as self-replication or patterns of

self-organization and control. These so-called "emergent properties"

represent the antithesis of the idea that the world is simply a collection of

facts waiting for adequate representation. The ideal system is a generative

engine that is simultaneously a producer and a product.

In Steven Johnson’s recent book, Emergence,

he offers the following explanation of emergent systems:

In

the simplest terms (emergent systems) solve problems by drawing on masses of

relatively stupid elements, rather than a single, intelligent “executive

branch.” They are bottom-up systems,

not top-down. They get their smarts

from below. In a more technical

language, they are complex adaptive systems that display emergent behavior. In these systems, agents residing on one

scale start producing behavior that lies one scale above them: ants create colonies; urbanites create

neighborhoods; simple pattern-recognition software learns how to recommend new

books. The movement from low-level

rules to higher-level sophistication is what we call emergence.[5]

In terms of the hard science underlying the concept of

emergence, it should be said that many approaches to evolutionary design,

artificial life, adaptive systems, and evolutionary computation share similar

characteristics but have distinct operating principles that are beyond the

scope of this essay to review. For the

purposes of this essay, the word “emergent” will refer to both the behaviors

of emergent systems and the metamorphosed families of objects created through

the use of evolutionary, emergent, or “artificial-life” principles.

Emergent behavior is the name given to the observed function of an

entity, and is, generally speaking, unrelated to the underlying form or

structure. These behaviors exist because of the nature of the interaction

between the parts and their environment, and cannot be determined through an

analytical reductionist approach.[6]

Novel forms generated using genetic algorithms exhibit “emergent

properties” as well. Instead of laying

the stress on the function of the object, an “artificial-life” (“a-life”)

designer will often focus on the form or changing morphology of discrete,

synthetically derived entities. To a

certain extent, early “evolutionary art” emphasized the fantastic power of

these techniques for unpredictable form generation. Later interactive and emergent artworks

have focused more on function—i.e., emergent behavior.

For evolution to occur, the system must be capable of

reproduction, inheritance, variation, and selection. Further, unlike natural

evolution, artificial evolution using evolutionary algorithms (EAs) also

requires three other important features:

initialisation, evaluation, and termination. EAs are “given a head start” by initialising (or seeding)

them with solutions that have fixed structures, behaviors, or meanings, but random

values (often generated by pseudo-random number generators). Evaluation in EAs is responsible for

guiding evolution towards better solutions.

Finally, explicit termination criteria are built into the program

to halt evolution, typically when a “best fit” or acceptable solution is

achieved, or when a predetermined number of iterations have been tested.[7]
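The ingredients listed above can be sketched as a short Python loop. This is a minimal illustration under stated assumptions, not the procedure of any system discussed in this essay: the bit-string genome, the count-the-ones fitness function, and names such as `evolve`, `POP_SIZE`, and `MAX_GENERATIONS` are all invented here for brevity.

```python
import random

GENOME_LEN = 20        # assumed: fixed structure, random values
POP_SIZE = 30          # assumed population size
MAX_GENERATIONS = 200  # assumed iteration limit

def fitness(genome):
    """Evaluation: count the 1 bits (a toy stand-in for a real objective)."""
    return sum(genome)

def mutate(genome, rng, rate=0.05):
    """Variation: flip each bit with a small probability."""
    return [1 - g if rng.random() < rate else g for g in genome]

def crossover(a, b, rng):
    """Inheritance: the child takes a prefix from one parent and the rest from the other."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def evolve(seed=0):
    rng = random.Random(seed)  # pseudo-random number generator
    # Initialisation: fixed-length genomes filled with random values.
    population = [[rng.randint(0, 1) for _ in range(GENOME_LEN)]
                  for _ in range(POP_SIZE)]
    for generation in range(MAX_GENERATIONS):
        population.sort(key=fitness, reverse=True)
        best = population[0]
        # Termination: stop as soon as a perfect score is reached.
        if fitness(best) == GENOME_LEN:
            return best, generation
        # Selection: only the fitter half is allowed to reproduce.
        parents = population[:POP_SIZE // 2]
        # Reproduction: keep the best unchanged, fill the rest with offspring.
        children = [best]
        while len(children) < POP_SIZE:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = children
    # Termination: the iteration limit was reached without a perfect score.
    population.sort(key=fitness, reverse=True)
    return population[0], MAX_GENERATIONS
```

Calling `evolve()` returns the best genome found and the generation at which the run stopped. Swapping in a different `fitness` function changes what the population is "bred" for without touching the rest of the loop.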

Irrespective of its

particular manifestation, the key concept—the thing that makes a phenomenon

“emergent” —is that forms, self-sustaining behaviors, and meanings can evolve

over time through a simple set of rules to produce completely non-predictable

results.

History of Emergence as a Concept

The historical foundations of the

concept of “emergence” can be found in the work of John Stuart Mill in the 19th

century who, in A System of Logic (1843), argued that a combined effect

of several causes cannot be reduced to its component causes. Early 20th century proponents of

“emergent evolution” developed a notion of emergence that echoes Mill’s idea: elements interact to form a complex whole

which cannot be understood in terms of the elements; the whole has emergent

properties that are irreducible to the properties of the elements.[8]

The first real statement of the possibility of linking machines to

evolutionary principles was developed as a thought experiment by the

mathematician John von Neumann in the late 1940s who conceived of

non-biological, kinematic self-reproducing machines.[9]

At first von Neumann investigated mechanical devices floating in a

pond of parts that would use the parts to assemble copies of themselves. Later

he turned to a purely theoretical model. In discussions with Polish

mathematician Stanislaw Ulam the idea of cellular automata

was born. Just before his death in 1957, von Neumann designed a

two-dimensional, 29-state cellular automaton (a self-replicating machine) which

carried code for constructing a copy of itself. His unpublished notes were

edited by Arthur Burks who published them in 1966 as a book, The Theory of

Self-Reproducing Automata.[10]

Other influential thinkers in the

history of emergent systems and evolutionary art include Richard Dawkins who

inadvertently founded the field of evolutionary art in 1985 when he wrote his

now famous Blind Watchmaker algorithm[11]

to illustrate the design power of Darwinian evolution. The algorithm demonstrates very effectively

how random mutation followed by non-random selection can lead to complex forms. These forms, called “biomorphs,” are visual

representations of a set of “genes.”

Each biomorph in the Blind Watchmaker applet has the following 15

genes:

- genes 1-8 control the

overall shape of the biomorph,

- gene 9 the depth of

recursion,

- genes 10-12 the color of

the biomorph,

- gene 13 the number of

segmentations,

- gene 14 the size of the

separation of the segments,

- gene 15 the shape used

to draw the biomorph (line, oval, rectangle, etc).

Biomorph Reserve
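The fifteen-gene layout listed above can be sketched as a small data structure with a mutation step. This is an illustrative sketch, not Dawkins's code or the applet's: the default values, the step sizes, the RGB reading of genes 10-12, and the names `Biomorph`, `mutate`, and `litter` are assumptions.

```python
import random
from dataclasses import dataclass, replace

PRIMITIVES = ("line", "oval", "rectangle")  # assumed subset of drawing shapes

@dataclass(frozen=True)
class Biomorph:
    shape: tuple = (1, 1, 1, 1, 1, 1, 1, 1)  # genes 1-8: overall shape
    depth: int = 4                            # gene 9: depth of recursion
    color: tuple = (128, 128, 128)            # genes 10-12: color (RGB assumed)
    segments: int = 1                         # gene 13: number of segmentations
    separation: int = 0                       # gene 14: separation of the segments
    primitive: str = "line"                   # gene 15: shape used to draw

def mutate(parent, rng):
    """Return a copy of `parent` with at most one gene nudged by a small step."""
    gene = rng.randrange(15)
    step = rng.choice((-1, 1))
    if gene < 8:
        shape = list(parent.shape)
        shape[gene] += step
        return replace(parent, shape=tuple(shape))
    if gene == 8:
        return replace(parent, depth=max(1, parent.depth + step))
    if gene < 12:
        color = list(parent.color)
        i = gene - 9
        color[i] = min(255, max(0, color[i] + 16 * step))
        return replace(parent, color=tuple(color))
    if gene == 12:
        return replace(parent, segments=max(1, parent.segments + step))
    if gene == 13:
        return replace(parent, separation=max(0, parent.separation + step))
    nxt = (PRIMITIVES.index(parent.primitive) + step) % len(PRIMITIVES)
    return replace(parent, primitive=PRIMITIVES[nxt])

def litter(parent, rng, size=8):
    """Random mutation: a brood of near-copies for the viewer to choose from."""
    return [mutate(parent, rng) for _ in range(size)]
```

The non-random half of the process is the viewer: choosing one child from `litter(...)` and using it as the next parent, round after round, is what accumulates small changes into complex forms.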

The science of emergent behavior is closely related to the work

done using machines to model evolutionary principles in the arts. Among the

first “evolutionary artists” to understand the significance of Richard Dawkins’

work is the British sculptor, William Latham.

Initially, Latham focused on the form generation capabilities of

evolutionary systems.

Trained as a fine artist,

Latham explored the growth and mutation of organic-looking shapes

in his early drawings. In the mid-1980s, he became an

IBM Fellow and began using computers to develop mutating images. This idea was

applied to other areas like architecture and financial forecasting, where

interesting mutations of scenarios could be selected and bred, with the user

acting like a plant breeder. Between

1987 and 1994 at IBM, Latham established his characteristic artistic

style and began working with IBM mathematician Stephen Todd. IBM’s sponsorship

of this groundbreaking work led to Todd developing the “Form Grow Geometry

System” (aka FormGrow) which was designed to create the bizarre organic forms

for which the artist is known.[12]

In 1992, Latham published a book, Evolutionary Art and

Computers (with Stephen Todd)[13],

documenting the development of his extraordinary form of art. Latham set up programs on the basis

of aesthetic choices in such a way that the parameters of style and content in

the images are established but the final form is not predetermined. Much of the

time, a fixed 'final form' may never materialize. Random mutation allows the artist to

navigate through the space of the infinitely varied forms that are inherent in

his program. His early FormGrow program provided rules through which the

'life-forms' are subject to the processes of 'natural selection.' The results

of such "Darwinian evolution driven by human aesthetics" are

fantastic organisms whose morphologies metamorphose in a sequence of animated

images.[14]

In the last few years, Latham has moved beyond the creation of images

into the world of interactive gaming. One of his newest applications is a

computer game called Evolva. Released in early 2000,

Evolva enacts the process of evolution -- but it is the game warriors

themselves who evolve. Picture this scenario:

sometime in the future, the human race has mastered the art of genetic

engineering and created the ultimate Darwinian warrior -- the Genohunter. A

Genohunter kills an enemy, analyses its DNA, and then mutates, incorporating

any useful attributes—strength, speed, bionic weapons—possessed by the victim.[15]

In the area of robotics, the artist David Rokeby saw the potential

of emergent properties to mitigate the “closed determinism” of some interactive

robotic artwork several years ago. In

1996 he wrote an essay that referenced the work of robot artist Norman White.

One of White's robots, Facing Out, Laying Low, interacts with its

audience and environment, but, if bored or over-stimulated, it will become

deliberately anti-social and stop interacting.

Rokeby writes:

This kind of behaviour may seem counter-productive, and

frustrating for the audience. But for White, the creation of these robots is a

quest for self-understanding. He balances self-analysis with creation,

attempting to produce autonomous creatures that mirror the kinds of behaviours

that he sees in himself. These behaviours are not necessarily willfully

programmed; they often emerge as the synergistic result of experiments with the

interactions between simple algorithmic behaviours. Just as billions of simple

water molecules work together to produce the complex behaviours of water (from

snow-flakes to fluid dynamics), combinations of simple programmed operations

can produce complex characteristics, which are called emergent properties, or

self-organizing phenomena.[16]

Interactive Emergence

Educational technologist

Ellen Wagner defines interaction as "… reciprocal events that require at

least two objects and two actions. Interactions occur when these objects and

events mutually influence one another."[17]

High levels of “interactivity” are achieved in

human/human and human/machine couplings that enable reciprocal and mutually

transforming activity.

Interactivity—particularly the type that harnesses emergent forms of

behavior—requires that both parties—human users or machines—be engaged in

open-ended cycles of productive feedback and exchange. Beyond simply providing an on/off switch or

a menu of options leading to “canned” content, users should be able to interact

intuitively with a system in ways that produce new information. Interacting with a system that produces

emergent phenomena is what I am calling “interactive emergence.”

Concrete examples from art and technology research illustrate how

different individuals, groups, and communities are engaging in interactive

emergence—from the locally controlled parameters characteristic of the video

game and the LAN bash, to large scale interactions involving distributed collaborative

networks over the Internet. Artists and

scientists such as Eric Zimmerman (game designer, theorist, and artist); John

Klima (artist and webgame designer); Hod Lipson and Jordan B.

Pollack (The Golem Project); Pablo Funes (computer scientist and

EvoCAD inventor); Christa Sommerer and Laurent Mignonneau (interactive

systems); Ken Rinaldo (artificial life); and Yves Amu Klein (living sculpture) are

doing pioneering work in an area that could be called “evolutionary art and

design.” Other artists

using evolutionary and emergent principles in their work include Jeffrey Ventrella (Gene Pool), The Emergent Art Lab, David Rokeby (Very Nervous System), Thomas Ray, Jon McCormack, Bill

Vorn and Louis-Philippe Demers, Simon Penny,

Erwin Driessens and Maria Verstappen, Steven Rooke, Nik Gaffney, Troy

Innocent, and Ulrike Gabriel.

What differentiates the work of these artists

from more traditional practices? What

educational background, perceptual skills, and conceptual orientations are

required of the artist—and of the viewer/participant? What systems, groups, or individuals are acknowledged and

empowered by these new works?

Creating

an experience for a participant in an interactive emergent artwork must take

into account that interactions are, by definition, not "one-way"

propositions. Interaction depends on feedback loops[18] that

include not just the messages that preceded them, but also the manner in which

previous messages were reactive. When a fully interactive level is reached,

communication roles are interchangeable, and information flows across and

through intersecting fields of experience that are mutually supportive and

reciprocal. The level of success at which a given interactive system attains

optimal levels of reciprocity could offer a standard by which to critique interactive

artwork in general. Interactive

emergence could be gauged by the degree to which the parties achieved optimal

reciprocity (sex is an appropriate analogy here) along with the degree to which

the system was “productive” of new content, not predicted by the contents of

its memory.

Many

artists have developed unique attempts at interactive emergence exploring

different forms of display, user control processes, navigation actions, and

system responses. Different works have varying levels of audience participation,

different ratios of local to remote interaction, and different levels of

emergent phenomena. Moreover, the range of artistic attempts at interactive

emergence provides us with a variety of approaches that could be used for

diverse kinds of learners in a variety of educational settings. Understanding

experiments with interactive emergence in an art context may help us to better

understand its use in pedagogical settings.

Many projects in recent years

exhibit various levels of interaction.

But the capability of exhibiting or tracking "emergent

properties" is seen by the author as a future hallmark of high-level

interactive systems. With projects that enable these heightened levels of

interactivity, we may begin to see the transformation of the discrete and

instrumental character of “information” into a broad—and unpredictable--

“knowledge base” that honors the contexts and connections essential to global

understanding and exchange.

With these challenges in mind, I

would like to discuss briefly the work of an artist team, an independent

artist, an animator, and a computer scientist who are exploring “emergent

systems” in their research: Christa

Sommerer and Laurent Mignonneau, Ken Rinaldo, Karl Sims, and Pablo Funes.

Christa Sommerer and Laurent Mignonneau: Interactive Plant Growing

(1993)

Austrian-born Christa Sommerer and French-born Laurent Mignonneau

teamed up in 1992, and now work at the ATR Media Integration and Communications

Research Laboratories in Kyoto, Japan.

In nearly a decade of collaborative work, Sommerer and Mignonneau have

built a number of unique virtual ecosystems, many with custom viewer/machine

interfaces. Many of their early projects allow audiences to create new plants

or creatures and influence their behavior by drawing on touch screens, sending

e-mail, moving through an installation space, or by touching real plants wired

to a computer.

Artist's

rendering of the installation showing the five pedestals with plants and the

video screen.

Interactive Plant Growing is an example of one such project. The

installation connects actual living plants, which can be touched or approached

by human viewers, to virtual plants that are grown in real-time in the

computer. In a darkened installation space, five different living plants are

placed on five wooden columns in front of a high-resolution video projection

screen. The plants themselves are the interface. They are in turn connected to

a computer that sends video signals from its processor to a high-resolution

video data projection system. Because the plants are essentially antennae

hard-wired into the system, they are capable of responding to differences in

the electrical potential of a viewer's body. Touching the plants or moving your

hands around them alters the signals sent through the system. Viewers can

influence and control the virtual growth of more than two dozen computer-based

plants.

Screen shot of the video projection during one interactive

session.

Viewer participation is crucial to the life of the piece. Through their

individual and collective involvement with the plants, visitors decide how the

interactions unfold and how their interactions are translated to the screen.

Viewers can control the size of the virtual plants, rotate them, modify their

appearance, change their colors, and control new positions for the same type of

plant. Interactions between a viewer's body and the living plants determine how

the virtual three-dimensional plants will develop. Five or more people can

interact at the same time with the five real plants in the installation space.

All events depend exclusively on the interactions between viewers and plants.

The artificial growing of computer-based plants is, according to the artists,

an expression of their desire to better understand the transformations and

morphogenesis of certain organisms (Sommerer et al., 1998).

Sommerer and Mignonneau: Verbarium (1999)

In a more recent project the artists have created an interactive

"text-to-form" editor available on the Internet. At their Verbarium

web site, on-line users are invited to type text messages into a small pop-up

window. Each of these messages functions as a genetic code for creating a

visual three-dimensional form. An algorithm translates the genetic encoding of

text characters (i.e., letters) into design functions. The system provides a

steady flow of new images that are not pre-defined but develop in real-time

through the interaction of the user with the system. Each different message

creates a different organic form. Depending on the composition of the text, the

forms can either be simple or complex. Taken together, all text images are used

to build a collective and complex three-dimensional image. This image is a

virtual herbarium, comprised of plant forms based on the text messages of the

participants. On-line users not only help to create and develop this virtual

herbarium, but also have the option of clicking on any part of the collective

image to de-code earlier messages sent by other users.

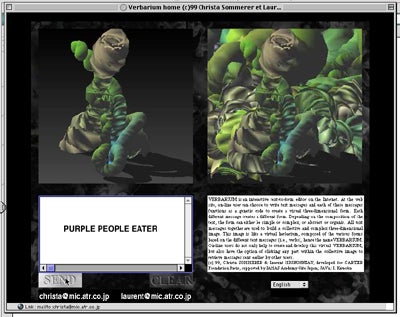

Screen

shot of the Verbarium web page showing the collaborative image created by

visitors to the site.

The text-to-form algorithm translated the author’s phrase "purple people

eater" into the image at the upper left. This image

was subsequently collaged into the collective "virtual herbarium."
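The idea of text acting as a genetic code can be sketched in a few lines of Python. The artists' actual translation table is not reproduced in this essay, so everything below is hypothetical: the `DESIGN_FUNCTIONS` names, the modulo mapping, and the decision to skip spaces and punctuation are assumptions made only to show how each character could select a design function and a parameter.

```python
# Hypothetical design functions; Verbarium's own function table is not given here.
DESIGN_FUNCTIONS = ("translate", "rotate", "scale", "branch", "color")

def text_to_form(message):
    """Translate each letter of `message` into one (function, amount) instruction."""
    instructions = []
    for ch in message.lower():
        if not ch.isalpha():
            continue                       # assumed: spaces and punctuation carry no gene
        code = ord(ch)
        name = DESIGN_FUNCTIONS[code % len(DESIGN_FUNCTIONS)]
        amount = (code % 7 + 1) / 7.0      # parameter in the range (0, 1]
        instructions.append((name, amount))
    return instructions

form = text_to_form("purple people eater")  # one instruction per letter
```

Because the mapping is deterministic, the same message always yields the same form, and a longer or more varied message yields a longer, more varied instruction list. That is one simple way to get "simple or complex" forms from the composition of the text.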

In the following passage, the artists discuss future goals for the

work:

We anticipate that as more users participate,

increasingly complex image structures will emerge over time…While our prototype

system succeeds in modeling some of the features of complex system, future

updates of the systems should include the modeling of genetic exchange of

information (text characters) between forms, creating offspring forms through standard

genetic crossover and mutation operations as we have used them in the past. The

potential benefit of such an extended system will be the expansion of

diversity, the reaction to neighbors and to external control, exploration of

their options, and replication, basically the remaining features that are

commonly associated with complex adaptive systems, as described by Crutchfield.

Another further update of the system should also include the capacity to

simultaneously display all messages in the browser's window; this should make

it possible for users to retrieve all messages ever sent and to follow the

whole evolution of interaction history.[19]

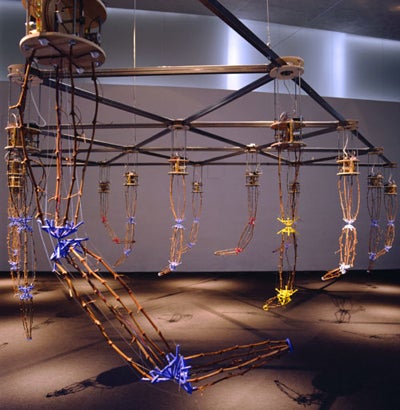

Ken Rinaldo: Autopoiesis (2000)

Overview

of all fifteen robotic arms of the Autopoiesis installation.

Photo credit: Yehia Eweis.

A work by American artist Ken Rinaldo was recently exhibited in

Finland as part of "Outoäly, the Alien Intelligence Exhibition 2000,"

curated by media theorist Erkki Huhtamo.[20] Rinaldo, who has a background in both

computer science and art, is pursuing projects influenced by current theories

on living systems and artificial life. He is seeking what he calls an

"integration of organic and electro-mechanical elements" that point

to a "co-evolution between living and evolving technological

material."

Rinaldo's contribution to the Finnish exhibition was an installation entitled Autopoiesis[21],

which translates literally as "self making." The work is a

computer-based installation consisting of fifteen robotic sound sculptures that

interact with the public and modify their behaviors over time. These behaviors

change based on feedback from infrared sensors that determine the presence of

the participant/viewers in the exhibition, and the communication between each

separate sculpture.

The series of robotic sculptures--mechanical arms that are suspended from an

overhead grid--"talk" with each other (exchange audio messages)

through a computer network and audible telephone tones. The interactivity

engages the participants who in turn affect the system's evolution and

emergence. This interaction, according to the artist, creates a system

evolution as well as an overall group sculptural aesthetic. The project

presents an interactive environment that is immersive, detailed, and able to

evolve in real time by utilizing feedback and interaction from audience

members.

Karl Sims

One of the most

innovative creators of “artificial life” is Karl Sims. Sims’s

cross-disciplinary background at MIT in biology and computers gave him insights into

how computers could be used to model genetic systems that evolved

"creatures" -- objects with bodies and the capacity to develop

evolving intelligent behaviors over time. In earlier experiments with growing

"plants," he specified instructions for evolving the development of

the organisms. With his creatures, he

added movements and intelligent behaviors that could also evolve. Removing

himself from the selection of the "fittest" from one generation to

the next, Sims established goals, then let the computer determine how well

offspring in a generation met the fitness criteria, and produce the next

generation.

In an interview with

the late visionary theorist, Steven Holtzman,

Sims stated: "I've always been interested in getting computers to

do the work. While I worked in Hollywood, I was doing animations with details

that no one would ever want to do by hand. For example, I used a computer to

create an animated waterfall, when I could never have designed each drop of

water for each frame in the animation. Instead, I created it with a completely

procedural method." -- that is, he created a general description of the

waterfall and then let the computer generate the waterfall itself.

Sims soon began

playing with relationships between his creatures. Holtzman described one such

project in detail:

… competing creatures were positioned at opposite ends of an open

space and a single cube was placed in the middle with the goal of creating

competition between the two creatures. Whichever creature got to and maintained

control over the cube first won. What was most interesting was how the species

developed strategies to counter an opponent's behavior. Some creatures learned

to push their opponent away from the cube, while others moved the cube away

from their opponents. One of the more humorous approaches was a large creature

that would simply fall on top of the cube and cover it up, so its opponent

couldn't get to it. Some counterstrategies took advantage of a specific

weakness in the original strategy but could be foiled easily in a few

generations by adaptations in the original strategy. Others permanently

defeated the original strategy.[22]

The power of Sims's

artificial evolution is its ability to come up with solutions we couldn't

otherwise imagine. Sims states:

"When you witness the process, you get to see how things slowly evolve,

then quickly evolve, get stuck, and then get going again. Mutation, selection,

mutation, selection, mutation, selection -- the process represents the ability

to surpass the complexity that we can handle with traditional design

techniques. Using the computer, you can go past the complexity we could

otherwise handle; you can go beyond equations we can even understand."

Sims’s work explores both the concept of evolving emergent “forms” as well as

emergent behaviors.[23]

In more recent work, Sims has introduced the possibility for

audience members to interact with the organisms of his world. In Galapagos, computer-simulated organisms

in abstract forms display themselves on twelve monitors. Participants select an

organism and consciously choose to let it continue to exist, copulate, mutate

and reproduce itself by pressing sensor-equipped foot pedals located in front

of the monitors. This is a work in which virtual "organisms" undergo

an interactive Darwinian evolution.

Galapagos (1997)

The process in this

exhibit is a collaboration between human and machine. The visitors provide the

aesthetic information by selecting which animated forms are most interesting,

and the computers provide the ability to simulate the genetics, growth, and

behavior of the virtual organisms. But the results can potentially surpass what

either human or machine could produce alone. Although the aesthetics of the

participants determine the results, they are not designing in the traditional

sense. They are rather using selective breeding to explore the

"hyperspace" of possible organisms in this simulated genetic system.

Since the genetic codes and complexity of the results are managed by the

computer, the results are not constrained by the limits of human design ability

or understanding.[24]
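The division of labor described here, where the visitor selects and the computer handles the genetics, can be sketched as a loop in which a callback takes the place of a fitness function. This is a simplified sketch and not Sims's implementation: the list-of-floats genome, the mutation-only reproduction (no mating), and the names `interactive_evolution` and `choose` are assumptions. The `choose` callback stands in for the foot pedals.

```python
import random

def mutate(genome, rng, sigma=0.1):
    """Perturb every gene slightly with Gaussian noise."""
    return [g + rng.gauss(0.0, sigma) for g in genome]

def interactive_evolution(choose, rounds=10, screens=12, genes=6, seed=0):
    """Evolve genomes; `choose(candidates)` returns the index of the viewer's favourite."""
    rng = random.Random(seed)
    candidates = [[rng.uniform(-1, 1) for _ in range(genes)]
                  for _ in range(screens)]
    for _ in range(rounds):
        favourite = candidates[choose(candidates)]
        # The survivor stays on display; the other screens show its mutated offspring.
        candidates = [favourite] + [mutate(favourite, rng)
                                    for _ in range(screens - 1)]
    return candidates[0]

# A simulated visitor who always prefers the organism with the largest first gene.
def simulated_visitor(candidates):
    return max(range(len(candidates)), key=lambda i: candidates[i][0])

result = interactive_evolution(simulated_visitor)
```

No objective is written into the program itself. Whatever the visitor happens to prefer becomes the selective pressure, which is why the participants are breeding rather than designing.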

Pablo Funes: EvoCAD

According to

computer scientist Pablo Funes, the new field of Evolutionary Design may open up an unexpected

creative role for the computer in CAD (computer aided design). In a CAD system designed using evolutionary

design principles, Funes maintains that

“not only can designs be drawn (as in CAD), or drawn and simulated

(as in CAD+simulation), but (they can also be) designed by the computer following

guidelines given by the operator.” His

EvoCAD program successfully combines the theory of evolutionary design with the

practical outcomes associated with CAD.

In its initial

iteration, the EvoCAD system takes the form of a mini-CAD system to design 2D

Lego structures. Some success has also been demonstrated with fully 3D Lego

structures (see “Table” structure below, fig. B). His application allows the user to manipulate Lego structures,

and test their gravitational resistance using a simplified structural

simulator. It also interfaces to an evolutionary algorithm that combines

user-defined goals with simulation to evolve possible solutions for user-defined

design problems. The results of the evolutionary process set in motion are sent

back to the CAD front-end to allow for further re-design until the desired

solution is obtained.

Boiled

down to its basics, the process combines a particular genetic representation

with various fitness functions in order to create physical simulations that

“solve” hypothetical problems. These elements are in turn regulated by a “plain

steady-state” genetic algorithm. Funes describes the process of meeting certain

design objectives as follows:

To

begin an evolutionary run, a starting structure is first received, consisting

of one or more bricks, and "reverse-compiled" into a genetic

representation that will seed the population. Mutation and crossover operators

are applied iteratively to grow and evolve a population of structures. The

simulator is run on each new structure to test for stability and load support,

needed to calculate a fitness value. The simulation stops when all objectives

are satisfied or when a timeout occurs.[25]
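
The loop Funes describes (seed a population from a starting structure, apply crossover and mutation, simulate each new structure, stop on success or timeout) can be sketched as a small steady-state genetic algorithm. This is a toy under loudly stated assumptions, not EvoCAD's code: the genome (a list of sideways shifts in a single stack of bricks), the balance test that stands in for the structural simulator, the constants, and all names are hypothetical.

```python
import random

BRICK_WIDTH = 4   # studs; assumed value for this toy model
MAX_SHIFT = 3     # largest sideways shift between stacked bricks (assumed)

def positions(genome):
    """Left-edge position of every brick; brick 0 sits at x = 0."""
    xs = [0]
    for shift in genome:
        xs.append(xs[-1] + shift)
    return xs

def is_stable(genome):
    """Toy simulator: the bricks above each brick must balance over it."""
    xs = positions(genome)
    for i in range(len(xs) - 1):
        above = xs[i + 1:]
        mean_offset = sum(above) / len(above) - xs[i]
        if abs(mean_offset) > BRICK_WIDTH / 2:
            return False
    return True

def fitness(genome):
    """Horizontal reach of the top brick, or -1 if the stack would topple."""
    return positions(genome)[-1] if is_stable(genome) else -1

def crossover(a, b, rng):
    """One-point crossover between two parent genomes."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def mutate(genome, rng):
    """Replace one randomly chosen shift with a new random value."""
    child = list(genome)
    child[rng.randrange(len(child))] = rng.randint(0, MAX_SHIFT)
    return child

def evolve(seed_genome, target_reach, pop_size=20, max_steps=2000, rng=None):
    """Steady-state loop: one new child per step replaces the worst member."""
    rng = rng or random.Random()
    population = [list(seed_genome) for _ in range(pop_size)]  # seeded from the start structure
    scores = [fitness(g) for g in population]
    for step in range(max_steps):
        best = max(range(pop_size), key=scores.__getitem__)
        if scores[best] >= target_reach:       # objective satisfied
            return population[best], scores[best], step
        a, b = rng.sample(population, 2)
        child = mutate(crossover(a, b, rng), rng)
        score = fitness(child)                 # "run the simulator" on the child
        worst = min(range(pop_size), key=scores.__getitem__)
        if score >= scores[worst]:
            population[worst], scores[worst] = child, score
    best = max(range(pop_size), key=scores.__getitem__)
    return population[best], scores[best], max_steps  # timeout
```

A call such as `evolve([0, 0, 0, 0, 0], target_reach=3)` starts from a vertical stack of six bricks and searches for a stable stack that leans at least three studs to one side. Changing only `fitness` changes what gets evolved, which mirrors the point made in the text about altering fitness functions to obtain bridges, cranes, or tables.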

This

set of techniques allows Funes to create various evolving structures in

simulated form. By altering the fitness functions, Funes’s team has successfully

evolved and built many different structures, such as bridges, cantilevers,

cranes, trees and tables. While these

are not intended as “art” per se, they are highly expressive and

non-predictable structures that bring a new twist to the old adage, “form

follows function.” They provide an

important benchmark for artists and designers interested in using evolutionary

design principles in realizing a new class of graphic and sculptural objects.

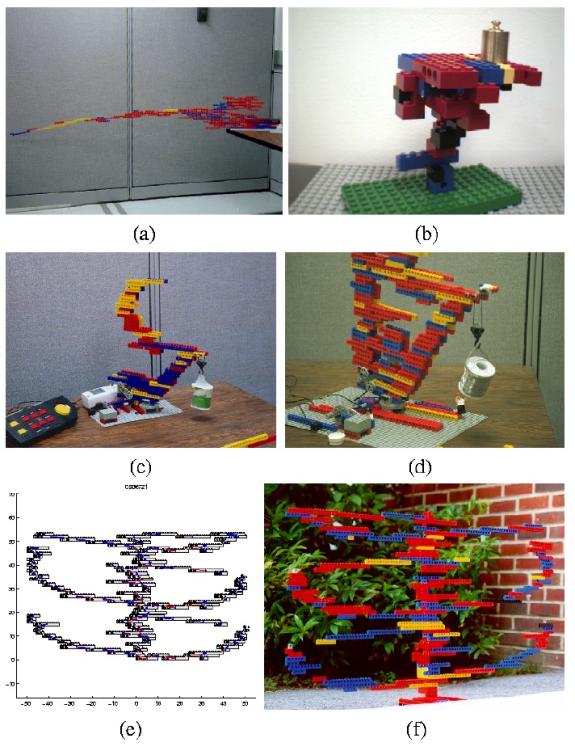

Pablo Funes, Evolved Lego structures:

cantilevered bridge (a); table, an example of 3D evolution (b); crane, early

and final stages (c, d); tree, internal representation and built structure (e,

f)

IMPLICATIONS FOR ART AND EDUCATION

The pendulum has

swung to a conservative extreme of late, with a renewed emphasis on high-stakes

testing and the development of curricular “standards” for educational policy

makers. This is not the place to

develop an argument against standards in schools; suffice it to say that

demonstrations of competencies resulting from “schooling” are a far cry from

real learning. (For a persuasive

essay on what’s wrong with standards in schools, see Elliot Eisner (1998).[26]) Learning is an evolving process that, at its

essence, is proven by the ability of the learner to apply received knowledge in

multiple and unrelated settings.

Demonstrating this level of competency involves “knowledge transfer”—the

concept of transfer being an effective measure of real knowledge—and

significant interaction with content, teachers, peers, and discipline

specialists.

Among the quotations at the head of this essay is one by Humberto

Maturana, the biologist,

cybernetician, and originator of the theory of autopoiesis, the concept of continual self-renewal (see

also Rinaldo above). For Maturana, autopoiesis

is the process by which an organism continuously reorganizes its own

structure. This has implications

not only for how we interact with our environment, but also for how we learn.

Maturana wrote in

1980 that:

"Learning is not a process of accumulation of representations

of the environment; it is a continuous process of transformation of behavior

through continuous change in the capacity of the nervous system to synthesize

it. Recall does not depend on the indefinite retention of a structural

invariant that represents an entity (an idea, image or symbol), but on the

functional ability of the system to create, when certain recurrent demands are

given, a behavior that satisfies the recurrent demands or that the observer

would class as a reenacting of a previous one."[27]

Put another way,

learning is not about fixed reactions to a static environment, but about the

ability of the organism to evolve behaviors that meet the challenges of a

constantly changing environment.

Is it possible for

artists and educators to provide students with rich, evolving

content—translated into “living curricula” that not only convey essential

skills for success, but also evolve to meet the ever-changing needs of students

attempting to develop higher-order cognitive skills that can transfer into a

variety of settings?

Imagine the ability

to interact with a system that not only provided a real-time mirror of

performance levels (the cognitive equivalent to a computerized rowing machine),

but also provided timely feedback. Now

tie this vision of a “smart system” into a distributed network of “learning

nodes” that harnessed the power of multiple CPUs and provided opportunities for

interactions among affiliates of “learning communities”. John Laird, at the University of Michigan,

an expert in the intersection of artificial life and gaming, has proposed new

avenues for gaming that may provide part of the answer. Laird writes:

Although

we have found computer games to be a rich environment for research on

human-level behavior representation, we do not believe that the future of AI in

games lies in creating more and more realistic arenas for violence. Better AI

in games has the potential for creating new game types in which social

interactions, not violence, dominate. The Sims provides an excellent example of

how social interactions can be the basis for an engaging game. Thus, we are

pursuing further research within the context of creating computer games that

emphasizes the drama that arises from social interactions between

humans and computer characters.[28]

Another example comes, again, from artists Christa

Sommerer and Laurent Mignonneau, who have developed a number of projects

expressly for the Internet. They

write:

The Internet nowadays contains more than a

billion documents, and the amount of text, image and sound data increases by

the minute. One could even argue that the Internet itself is one of the best

examples of a complex system. It provides an ideal platform for knowledge

discovery, data mining and data retrieval and systems that make use of a

dynamic and constantly evolving data base.[29]

In both the localized computer

installations and web-based projects realized by Sommerer and Mignonneau, the

interaction between multiple participants operating through a common interface

represents a reversal of the topology of information dissemination. The pieces

are enabled and realized through the collaboration of many participants

remotely connected by a computer network.

In an educational setting, this

heightened sense of interaction needs to be understood as crucial. Students and

instructors alike are capable of both sending and receiving messages across a

myriad of pathways. Many

educators continue to be stuck in a method of teaching that echoes the

structure of the one-way "broadcast"--a concept that harks back to

broadcast media such as radio. In the typical lecture the teacher as "source"

transmits information to passive "receivers." This notion of a

"one-to-many" model that reinforces a traditional hierarchical

top-down approach to teaching is at odds with truly democratic exchange. Everyone is a transmitter and a receiver, a publisher and a

consumer. In the new information ecology, traditional roles may become

reversed--or abandoned. Audience members become active agents in the creation

of the new networked/artwork learning community. Teachers spend more time

facilitating and "receiving" information than lecturing. Students

exchange information with their peers and become adept at disseminating

knowledge. Participant/learners

interacting with such systems are challenged to understand that cognition is

less a matter of absorbing ready made "truths" and more a matter of

finding meaning through iterative cycles of inquiry and interaction.

Ironically, this may be what good teaching has always done.

So would we be justified in building a

"machine for learning" that does essentially the same thing that good

teachers do? One argument is that by designing such systems we are forced to

look critically at the current manner in which information is generated,

shared, and evaluated. Further, important questions surface, such as

"who can participate," "who has access to the information,"

and "what kinds of interactions are enabled?" The traditional

"machine for learning" (the classroom) with its single privileged

source of authority (the teacher) is hardly a perfect model. Most of the time,

it is not a system that rewards boundary breaking, the broad sharing of

information, or the generation of new ideas. It IS a system that, in general,

reinforces the status quo. Intelligent machines such as Rinaldo's Autopoiesis

or Sommerer and Mignonneau’s Verbarium can help us to draw connections

between multiple forms of inquiry, enable new kinds of interactions between

disparate users, and increase a sense of personal agency and self-worth. While

intelligent machines will surely be no smarter than their programmers,

pedagogical models can be more easily shared and replicated. Curricula

(programs for interactions) can be modified or expanded to meet the special

demands of particular disciplines or contexts. Most importantly, users are free

to interact through the system in ways that are suited to particular learning

styles, personal choices, or physical needs.

Conclusion

The power of

networked computing, coupled with our understanding of emergent systems and

evolutionary computing, holds real promise.

The

unpredictable nature of the outcomes provides an ideational basis for both art

making and art teaching that is less deterministic, less bound in inherited

style and method, less totalizing in its aesthetic vision and, perhaps, less

about the ego of the individual artist/teacher. In addition to the mastery of materials and harnessing the powers

of the imagination that we expect of the professional artist, our new breed of

artist—call her an evolutionary—is equally adept at developing new

algorithms, envisioning useful and beautiful interfaces, and

managing/collaborating with machines and/or humans exhibiting non-deterministic

and emergent behaviors. Like a

horticulturalist who optimizes growing conditions for particular species but is

alert to the potential beauty of mutations in evolutionary strains, the evolutionary

works to prepare and optimize the conditions for conceptually engaging and

aesthetic outcomes. In order to do

this, this new breed of artist must have a fuller understanding of

interactivity, a healthy appreciation of evolutionary theory, and a gift for

setting into motion emergent behavior.

Because what we are

doing is modeling processes and behaviors that more closely approximate the

complexity of “real life,” we put ourselves in a position of

appreciation rather than one of domination and control. Interacting in collaboration with our

environment and seeking out unexpected outcomes through systems of emergence

provide new models for living on a tightly packed but richly diverse planet.

There is no question that the uses

of technology outlined here need to be held against the darker realities of

life in a hi-tech society. The insidious nature of surveillance and control,

the assault on personal space and privacy, the commodification of aesthetic

experience, and the ever-widening "digital divide" between the

technological “haves and have-nots” are constant reminders that technology is a

double-edged sword.

But there is at least an equal chance that a clearer understanding of the

concepts of interaction, evolution, and emergence—enabled by technology—will

yield a broader palette of choices from which human beings can come together to

create meaning. In watching these processes unfold, educators will surely find

new models for learning.

References and Notes